NVIDIA Ising: The Open-Source AI Models That Make Quantum Computing Actually Work

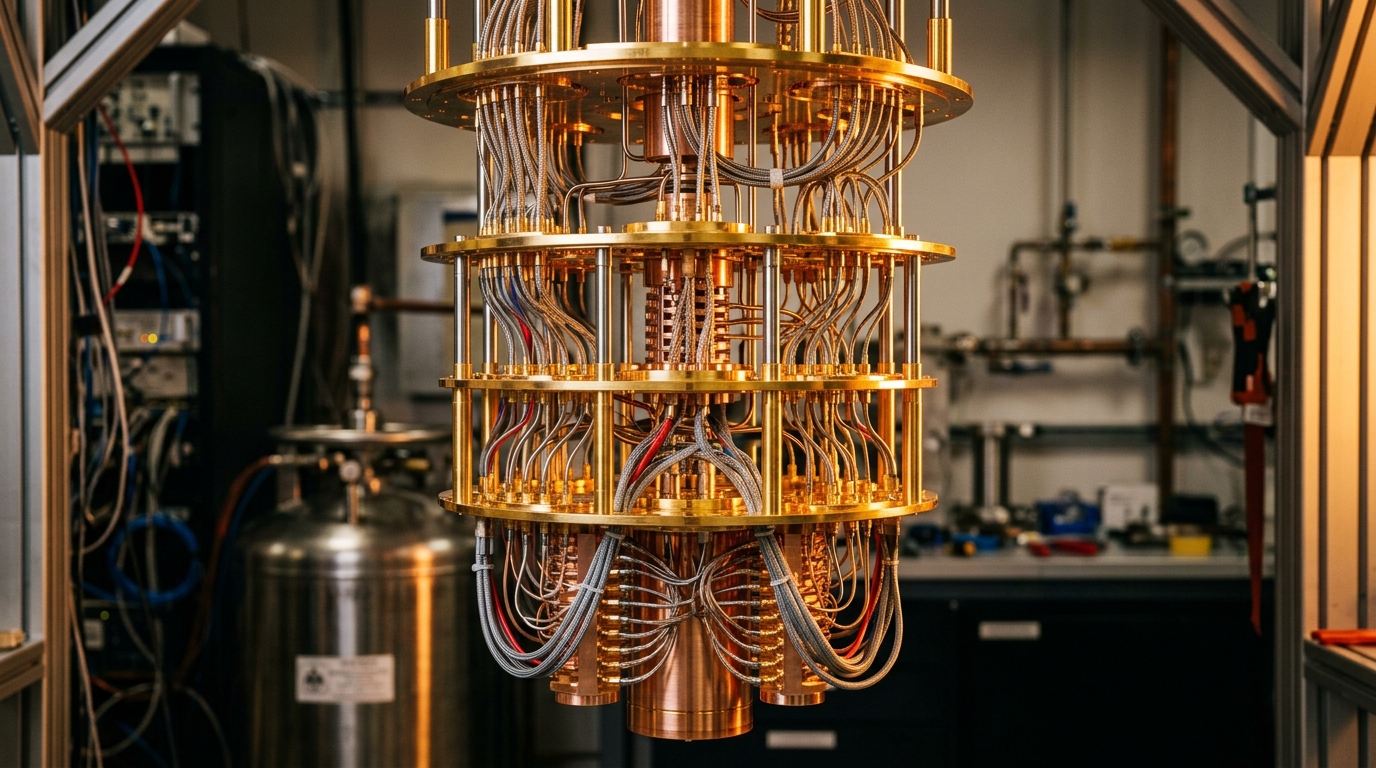

Quantum computing has a dirty secret: the hardware is fragile, the signals are noisy, and even the best machines spend most of their time correcting their own errors. On April 15, 2026, NVIDIA released Ising — a family of open-source AI models aimed squarely at that bottleneck. It is, the company says, the first time anyone has shipped pre-trained, open quantum AI models at this scale. And the benchmark numbers are loud enough to pay attention to.

NVIDIA claims Ising delivers up to 2.5x faster and 3x more accurate quantum error-correction decoding than traditional approaches. If those numbers hold up in third-party testing, the path from "interesting lab device" to "useful fault-tolerant machine" just got meaningfully shorter.

What Ising Actually Is

Ising is not a single model. It is a two-pronged family that tackles the two most painful problems in running a quantum processor: calibrating the hardware and decoding its errors in real time.

The first component, Ising Calibration, is a 35-billion-parameter vision-language model fine-tuned to read experimental measurements from a quantum processing unit (QPU) and infer the adjustments needed to tune it. Paired with an agent, NVIDIA says it can reduce calibration time from days to hours. If you have ever watched a quantum team spend a week hand-tuning microwave pulses, that is not a small promise.

The second component, Ising Decoding, is a pair of 3D convolutional neural networks that handle surface-code quantum error correction. Surface codes are the leading candidate for fault-tolerant quantum computing, and they require constant, fast decoding of measurement data to catch and correct errors before they cascade. NVIDIA shipped two variants:

| Model | Parameters | Receptive Field | Optimized For |
|---|---|---|---|
| Ising-Decoder-SurfaceCode-1-Fast | ~912K | 9 | Latency |
| Ising-Decoder-SurfaceCode-1-Accurate | ~1.79M | 13 | Accuracy |

Both are trained on cubic input volumes (9×9×9 and 13×13×13 respectively). Because they are convolutional, they can be applied to new decoding volumes without retraining when the surface-code distance or the number of rounds changes at inference time. That's a big deal in practice: retraining a decoder every time your lab changes code parameters is a heavy tax on iteration speed.
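To see why a convolutional decoder is not tied to one input size, a little arithmetic helps. The sketch below assumes stacks of stride-1 3×3×3 convolutions; the layer counts (4 and 6) are hypothetical, picked only because they reproduce receptive fields of 9 and 13, and NVIDIA's actual architecture may differ.

```python
def receptive_field(kernel_sizes):
    """Per-axis receptive field of stacked stride-1, undilated convolutions."""
    rf = 1
    for k in kernel_sizes:
        rf += k - 1  # each layer widens the view by (kernel size - 1)
    return rf


def conv3d_param_count(kernel, in_channels, out_channels):
    """Weights plus biases of one 3D conv layer; note there is no input-size term."""
    return kernel ** 3 * in_channels * out_channels + out_channels


# Hypothetical depths, chosen only to match the receptive fields in the table.
print(receptive_field([3] * 4))  # 9
print(receptive_field([3] * 6))  # 13

# The same 224 parameters apply whether the input volume is 9x9x9 or 21x21x30.
print(conv3d_param_count(kernel=3, in_channels=1, out_channels=8))  # 224
```

The parameter count depends only on kernel size and channel widths, so the same trained weights can slide over a larger or smaller syndrome volume.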

Why Error Correction Is the Bottleneck

Here's the physics problem in one sentence: qubits are noisy, and the noise compounds fast. Every gate you apply, every nanosecond that passes, the fragile quantum state drifts. The only known way to build a useful quantum computer is to encode logical qubits across many physical qubits and continuously measure "syndromes" — clues about which errors occurred without destroying the state itself — then use a classical algorithm to decode what actually went wrong and apply corrections.
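The syndrome idea is easier to see in a toy setting. The sketch below uses a 3-bit classical repetition code as a stand-in for a surface code (an assumption made purely for illustration): parity checks reveal where an error sits without revealing the encoded value, and a lookup table plays the role of the decoder.

```python
def measure_syndrome(bits):
    """Parities of neighboring bits: they locate an error but hide the encoded value."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


# Toy decoder: syndrome -> index of the single bit most likely flipped.
SYNDROME_TO_FLIP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(bits):
    """Apply the correction suggested by the syndrome and return the repaired bits."""
    flip = SYNDROME_TO_FLIP[measure_syndrome(bits)]
    fixed = list(bits)
    if flip is not None:
        fixed[flip] ^= 1
    return fixed


noisy_one = [1, 0, 1]   # logical 1 with an error on the middle bit
noisy_zero = [0, 1, 0]  # logical 0 with the same error

print(measure_syndrome(noisy_one))   # (1, 1)
print(measure_syndrome(noisy_zero))  # (1, 1), same syndrome, different logical value
print(correct(noisy_one))            # [1, 1, 1]
print(correct(noisy_zero))           # [0, 0, 0]
```

A real surface code does the same thing with stabilizer measurements over a 2D grid of qubits, repeated every round, which is why the decoder has to keep up in real time.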

Traditional surface-code decoders like minimum-weight perfect matching (MWPM) and union-find have been the standard for years. They are fast, but they make simplifying assumptions about noise that break down on real hardware — where errors are correlated, biased, and deeply hardware-specific.
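For intuition on what matching does, here is a deliberately naive sketch: pair up syndrome "defects" on a line so that the total distance between partners is minimal. It uses exhaustive search and ignores code boundaries, both simplifications for illustration; real decoders rely on efficient algorithms such as Edmonds' blossom algorithm.

```python
def min_weight_perfect_matching(defects):
    """Exhaustively pair an even number of defect positions, minimizing total |a - b|."""
    if len(defects) % 2:
        raise ValueError("need an even number of defects (boundaries are ignored here)")
    if not defects:
        return 0, []
    first, rest = defects[0], defects[1:]
    best_cost, best_pairs = float("inf"), None
    for i, partner in enumerate(rest):
        remaining = rest[:i] + rest[i + 1:]
        cost, pairs = min_weight_perfect_matching(remaining)
        cost += abs(first - partner)  # weight = distance, i.e. independent, uniform errors
        if cost < best_cost:
            best_cost, best_pairs = cost, [(first, partner)] + pairs
    return best_cost, best_pairs


cost, pairs = min_weight_perfect_matching([1, 2, 6, 7])
print(cost, pairs)  # 2 [(1, 2), (6, 7)]
```

Using plain distance as the edge weight is exactly the kind of simplifying assumption described above: it treats every error as equally likely and independent, which correlated or biased hardware noise violates.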

A neural network decoder can instead be trained on a noise model matched to a specific QPU. That is what NVIDIA's 3x accuracy claim points at: not a theoretical improvement against a toy noise model, but a gain on hardware-specific error distributions.

How to Use It

Ising is distributed through three channels: GitHub, Hugging Face, and build.nvidia.com. The training framework is on GitHub at NVIDIA/Ising-Decoding, and the pre-trained models sit in the NVIDIA Ising collection on Hugging Face.

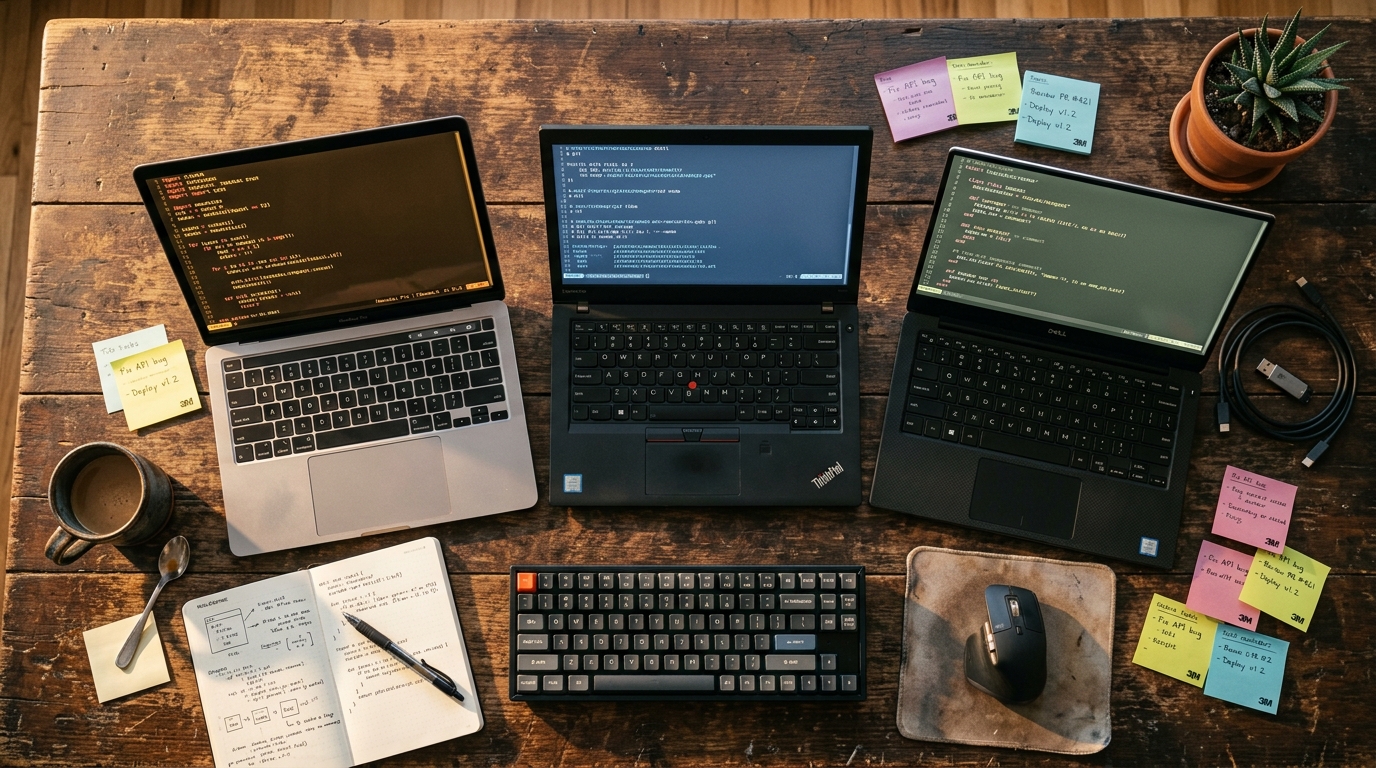

To retrain a decoder on your own hardware's noise model, the workflow is:

- Define your noise model and surface-code orientation

- Point the framework at a target model depth

- Let the cuStabilizer library inside NVIDIA cuQuantum generate synthetic training data

- Train the 3D CNN in PyTorch
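The data-generation step in the workflow above can be pictured with a small stand-in. This sketch does not use cuStabilizer or any of its API; it assumes a repetition code, independent bit-flip noise with probability `p` per bit per round, and perfect measurements, and every function name in it is hypothetical. It only shows the shape of the output: a rounds-by-checks syndrome volume paired with the error pattern a decoder would learn to predict.

```python
import random


def sample_example(distance, rounds, p, rng):
    """One (syndrome volume, final error pattern) pair under toy bit-flip noise."""
    errors = [0] * distance
    volume = []
    for _ in range(rounds):
        for q in range(distance):
            if rng.random() < p:  # the user-defined noise model lives here
                errors[q] ^= 1
        # Parity checks between neighbors, recorded once per round.
        volume.append([errors[q] ^ errors[q + 1] for q in range(distance - 1)])
    return volume, list(errors)


def make_dataset(n, distance, rounds, p, seed=0):
    """Generate n labeled examples with a seeded, reproducible random stream."""
    rng = random.Random(seed)
    return [sample_example(distance, rounds, p, rng) for _ in range(n)]


dataset = make_dataset(n=1000, distance=5, rounds=3, p=0.05)
volume, label = dataset[0]
print(len(volume), len(volume[0]), len(label))  # 3 4 5
```

In the real pipeline the volumes are three-dimensional (two spatial axes plus rounds) and feed the PyTorch training step; swapping in a different noise model changes only the sampling, not the training loop.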

The library integrates with NVIDIA's CUDA-Q quantum software platform and the NVQLink QPU-GPU interconnect — the pipes that move syndrome data off the QPU and into a GPU for decoding at the speeds real-time error correction demands.

Who's Already Using It

The early adopter list reads like a who's-who of serious quantum research: Academia Sinica, Fermi National Accelerator Laboratory, Harvard's John A. Paulson School of Engineering and Applied Sciences, Infleqtion, IQM Quantum Computers, Lawrence Berkeley National Laboratory's Advanced Quantum Testbed, and the UK National Physical Laboratory (NPL).

That is not a set of hobbyists. Those are the labs building the machines that will — or won't — deliver on quantum computing's promise. If Ising becomes the default decoder stack across that group, it becomes a de facto standard the way PyTorch quietly became the default in machine learning.

The Strategic Read

NVIDIA doesn't make quantum processors. It sells GPUs, interconnects, and software that sit next to whoever does. Open-sourcing Ising is a classic platform play: give the hard parts away, make sure the hard parts run best on your silicon, and harvest the margin on the hardware everyone now needs.

There is a harder-nosed version of this argument, too. Quantum computing has been marketed as the thing that will displace classical accelerators. By embedding itself as the AI layer inside every serious quantum stack, NVIDIA turns that threat into a partnership. Every qubit that gets closer to useful makes someone buy more H100s and Blackwells to run the decoder next to it.

What to Watch

A few open questions will determine whether Ising is a real inflection point or a strong marketing launch:

- Third-party replication. The 2.5x / 3x numbers are NVIDIA's. Independent benchmarks from labs not already on the early-adopter list will matter.

- Noise-model portability. The decoders are trained on synthetic data from a user-defined noise model. How much does performance degrade when the actual QPU noise drifts away from that model over a multi-hour run?

- Calibration-VLM generalization. A 35B-parameter vision-language model reading oscilloscope-style measurements is a novel use of a VLM. Whether it transfers across QPU architectures — superconducting, trapped-ion, neutral-atom — is an empirical question.

The Bottom Line

Quantum computing has been a decade away for three decades. What changes the timeline is not a bigger chip. It is reliable error correction that keeps pace with the hardware, plus calibration faster than any team can tune by hand. NVIDIA Ising is a credible, open, benchmarked attempt at exactly that layer, and it ships with the ecosystem integration (CUDA-Q, NVQLink, PyTorch) to actually get used. If you run a quantum lab, it is the first thing to try this quarter. If you run a quantum startup, the competitive calculus just shifted under your feet.