AI's appetite for electricity has become an industry-wide crisis. Data centers consumed approximately 415 terawatt-hours (TWh) of electricity globally in 2024, according to the International Energy Agency, or about 1.5% of total global electricity use, and that figure is projected to more than double to 945 TWh by 2030. Every new frontier model compounds the problem. But a team at Tufts University just demonstrated that the solution might not require better hardware. It might require better thinking.

Led by Professor Matthias Scheutz, the Karol Family Applied Technology Professor at Tufts' School of Engineering, the research team has built a neuro-symbolic AI system that cuts training energy consumption by up to 100x while nearly tripling task accuracy, from 34% to 95%. The results will be presented at the International Conference on Robotics and Automation (ICRA) in Vienna this May.

The Problem With Brute Force

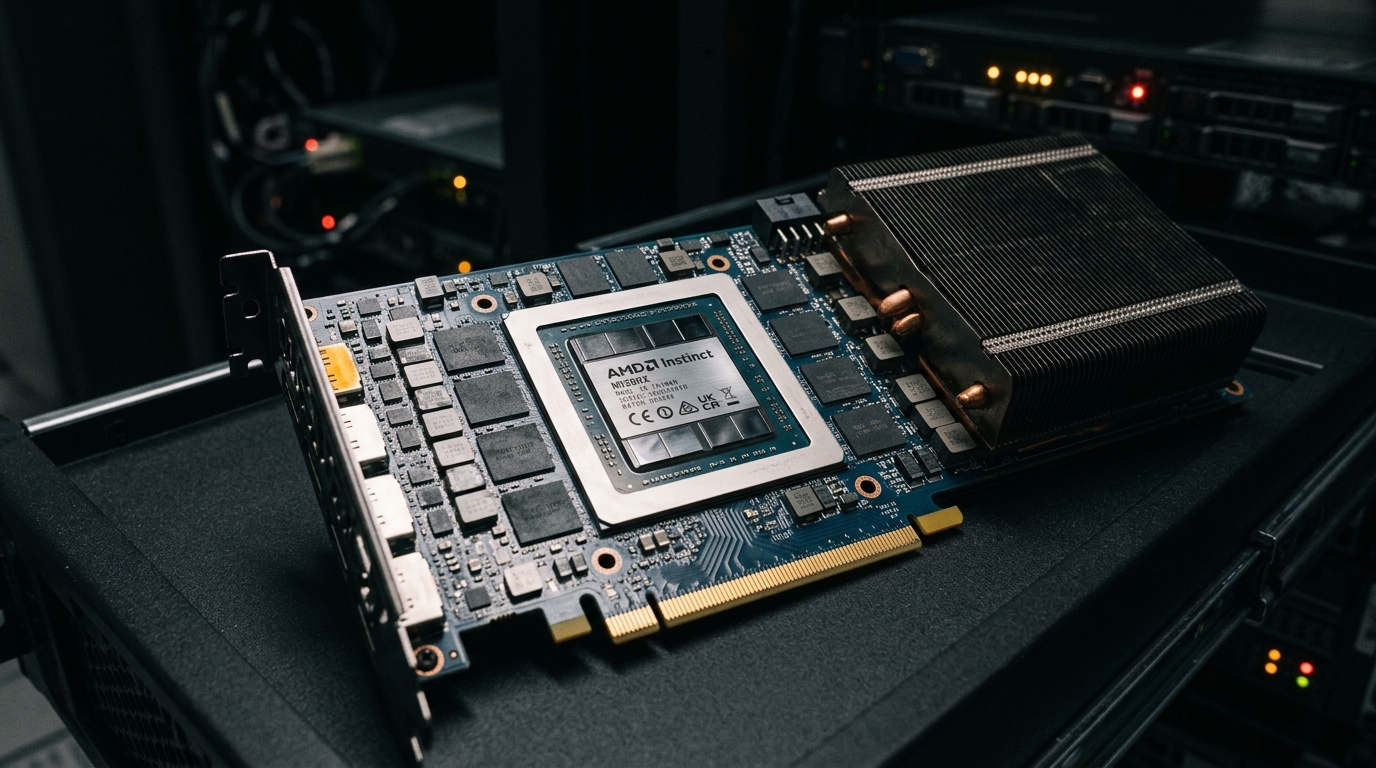

Today's most capable AI systems — particularly vision-language-action (VLA) models used in robotics — learn through massive trial and error. Feed them millions of examples, burn through thousands of GPU hours, and eventually they pick up patterns. It works, but the energy cost is staggering.

Training a single VLA model can take over 36 hours on high-end hardware. And the accuracy? On tasks requiring multi-step logical reasoning, standard VLA models manage a success rate of just 34%. That's a lot of electricity for a system that fails two-thirds of the time.

What Neuro-Symbolic AI Actually Does

The Tufts approach combines two paradigms that have historically been treated as competitors: neural networks and symbolic reasoning.

Neural networks excel at perception — recognizing objects, interpreting language, processing sensory input. Symbolic reasoning excels at logic — applying rules, planning sequences, understanding cause and effect. By fusing them, the system gets the best of both.

In practice, the neuro-symbolic model uses its neural components to see and understand the world, then hands off to symbolic reasoning to plan and act. This means the system doesn't need to brute-force its way through every possible action sequence. It applies logical constraints that dramatically narrow the search space.

As Scheutz's team describes it, the system can "apply rules that limit the amount of trial and error during learning." Fewer wrong paths explored means less compute burned.
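To make the idea of rules narrowing the search concrete, here is a minimal sketch in Python using the Tower of Hanoi. It is not the Tufts system: the state encoding, the function names (`candidate_moves`, `legal_moves`, `apply_move`, `plan`), and the choice of breadth-first search are all assumptions made for illustration, and the neural perception stage is left out entirely. The sketch only shows how two symbolic rules discard most candidate actions before any of them is tried.

```python
from collections import deque
from itertools import permutations

# Hypothetical state encoding (an assumption for this sketch): a tuple of
# three pegs, each a tuple of disc sizes listed bottom to top.

def candidate_moves(state):
    """Every ordered (source, target) peg pair, with no rules applied."""
    return list(permutations(range(len(state)), 2))

def legal_moves(state):
    """Keep only the candidates that satisfy the puzzle's symbolic rules."""
    moves = []
    for src, dst in candidate_moves(state):
        if not state[src]:
            continue  # rule: nothing can be moved from an empty peg
        if state[dst] and state[dst][-1] < state[src][-1]:
            continue  # rule: a larger disc may not sit on a smaller one
        moves.append((src, dst))
    return moves

def apply_move(state, move):
    """Return the new state after moving the top disc from src to dst."""
    src, dst = move
    pegs = [list(peg) for peg in state]
    pegs[dst].append(pegs[src].pop())
    return tuple(tuple(peg) for peg in pegs)

def plan(start, goal):
    """Breadth-first search over legal moves only; returns a shortest plan."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, path = frontier.popleft()
        if state == goal:
            return path
        for move in legal_moves(state):
            nxt = apply_move(state, move)
            if nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, path + [move]))
    return None  # no plan exists

start = ((3, 2, 1), (), ())
goal = ((), (), (3, 2, 1))
solution = plan(start, goal)

# Candidates without rules, candidates with rules, length of the plan found.
print(len(candidate_moves(start)), len(legal_moves(start)), len(solution))
# prints: 6 2 7
```

In the starting position, the rules cut six candidate moves down to two, and the planner finds the seven-move solution without ever attempting an illegal action. A purely learned policy would have to discover those same rules from examples.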

The Numbers That Matter

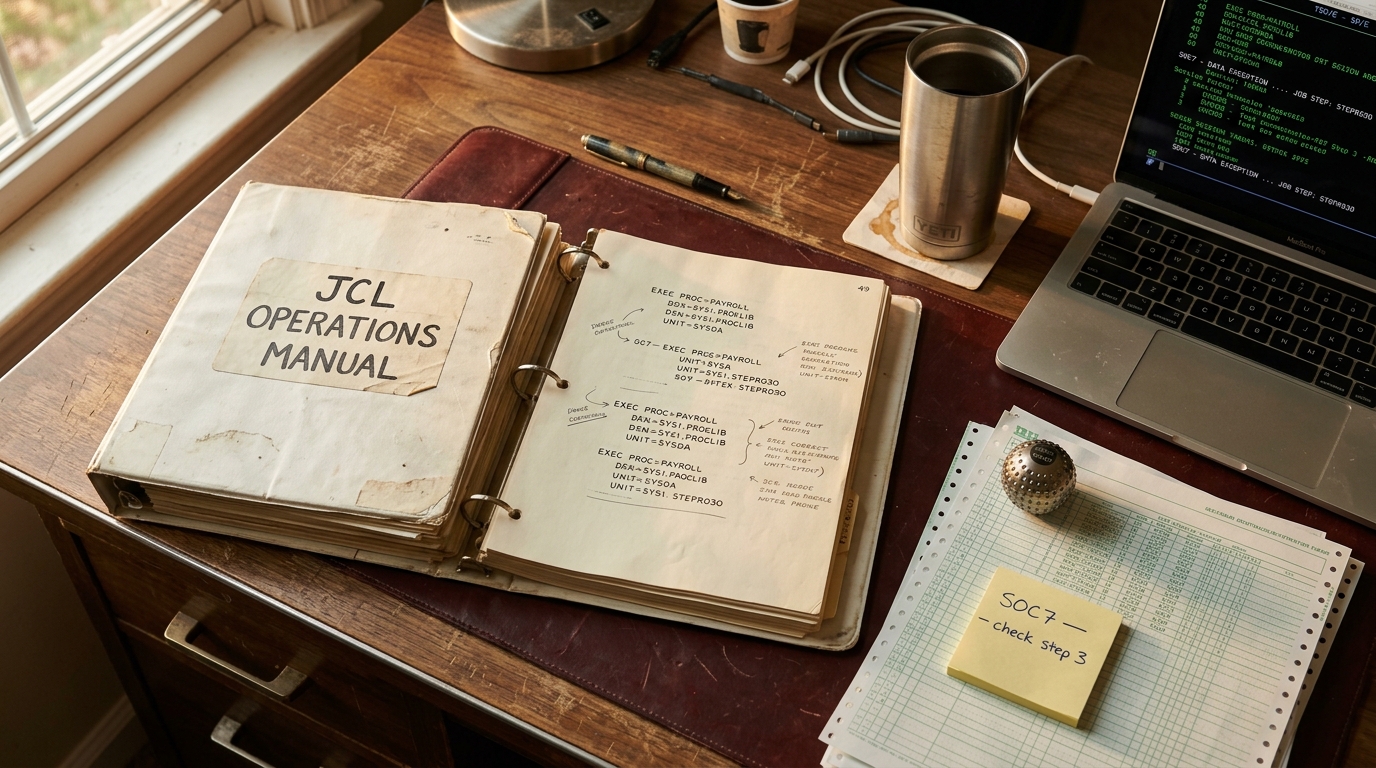

The research team — including Timothy Duggan, Pierrick Lorang, and Hong Lu — tested their approach on the classic Tower of Hanoi puzzle, a benchmark that requires precise multi-step planning. The results are striking:

| Metric | Standard VLA | Neuro-Symbolic VLA |
|---|---|---|
| Success rate | 34% | 95% |
| Success rate on unseen complex variants | 0% | 78% |
| Training time | 36+ hours | 34 minutes |
| Training energy | Baseline | 1% of baseline |
| Operational energy | Baseline | 5% of baseline |

The gap on unseen variants is the most revealing number. Standard models scored zero percent on novel configurations they hadn't been trained on. The neuro-symbolic model solved 78% of them. That's not incremental improvement — it's a fundamentally different kind of generalization.
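For readers who want to check the headline ratios, the short Python snippet below derives them from the figures reported in the table above. The variable names are illustrative, and the 36-hour training time is treated as a lower bound, so the training speedup is a minimum.

```python
# Figures as reported in the table; "36+ hours" is taken as 36 (a lower bound).
baseline_training_hours = 36
neuro_symbolic_training_minutes = 34
training_energy_pct = 1      # neuro-symbolic training energy, % of baseline
operational_energy_pct = 5   # neuro-symbolic operational energy, % of baseline
baseline_success_pct = 34
neuro_symbolic_success_pct = 95

training_speedup = baseline_training_hours * 60 / neuro_symbolic_training_minutes
training_energy_factor = 100 / training_energy_pct
operational_energy_factor = 100 / operational_energy_pct
accuracy_ratio = neuro_symbolic_success_pct / baseline_success_pct

print(f"Training speedup: at least {training_speedup:.1f}x")
print(f"Training energy reduction: {training_energy_factor:.0f}x")
print(f"Operational energy reduction: {operational_energy_factor:.0f}x")
print(f"Success-rate ratio: {accuracy_ratio:.2f}x")
```

The 100x figure refers to training energy. The operational saving is about 20x, and the accuracy gain is a little under 3x.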

Why Robotics Is the Right Testbed

VLA models sit at the intersection of AI's hardest problems: visual perception, language understanding, and physical action. A robot assembling components on a factory floor needs to see what's in front of it, understand verbal instructions, and execute precise movements — all in real time.

The energy requirements for these models have been a major barrier to deployment outside lab settings. If a system needs 36 hours of training and dedicated GPU clusters for every new task, it's economically viable only for the largest manufacturers. Cut that to 34 minutes at 1% of the energy cost, and suddenly small and mid-size operations can afford adaptive robotics.

The accuracy improvements matter just as much for safety-critical applications. A 34% success rate is a curiosity. A 95% success rate is a deployable system.

What This Doesn't Solve

It would be tempting to extrapolate these results to all of AI, but the researchers are careful about scope. Neuro-symbolic approaches work best on tasks with clear logical structure — sequential planning, rule-based constraints, hierarchical problem decomposition. That covers a lot of robotics and industrial automation.

Large language models generating free-form text or creative content operate in a fundamentally different domain where symbolic rules are harder to define. The energy savings for LLM training and inference will likely come from a different set of innovations — architectural efficiency, hardware improvements, or algorithmic breakthroughs in attention mechanisms.

That said, the principle is transferable: don't make the model learn everything from scratch when you can encode known constraints directly. The AI field's obsession with end-to-end learning has produced remarkable results, but it has also created systems that waste enormous compute rediscovering rules that humans already know.

The Bigger Picture

AI's energy crisis isn't hypothetical. It is already reshaping power grids. Microsoft, Google, and Amazon have signed deals to restart or build nuclear reactors specifically to power data centers. The IEA projects that data center electricity demand will more than double by 2030.

Neuro-symbolic AI won't single-handedly solve this problem, but the Tufts research demonstrates that architectural innovation can deliver order-of-magnitude improvements where hardware scaling delivers incremental gains. A 100x reduction in training energy isn't a rounding error — it's the difference between AI that only the largest companies can afford to train and AI that's accessible to research labs, universities, and smaller companies worldwide.

The Bottom Line

The Tufts team's neuro-symbolic approach turns AI's most wasteful habit — brute-force trial and error — into structured reasoning that uses a fraction of the energy while dramatically outperforming conventional methods. With ICRA in Vienna just weeks away, expect the robotics community to pay close attention. If these results replicate at scale, they won't just save electricity. They'll democratize a class of AI that has been locked behind prohibitive compute costs.