NVIDIA GR00T N1.7: The Open Robot Brain Trained on 20,854 Hours of Human Video

NVIDIA quietly shipped one of the most important open-weight model releases of the year on April 17, 2026 — and it isn't another LLM. It's the first humanoid robot foundation model with commercial licensing and a measurable scaling law for dexterity.

NVIDIA Isaac GR00T N1.7 is a 3B-parameter open Vision-Language-Action (VLA) model that maps a camera view and a natural-language instruction into continuous motor commands. Published inference numbers for the same-size N1.6 put the control loop at roughly 27 Hz on an RTX 5090. N1.7 is a drop-in replacement for GR00T N1.6, and unlike the research-only checkpoints that came before it, you can deploy it on a factory floor.

The Action Cascade architecture

GR00T N1.7 uses a dual-system design that mirrors how cognitive scientists describe human motor control — slow deliberate reasoning sitting on top of fast reactive control.

- System 2: Vision-Language Model. A Cosmos-Reason2-2B backbone (Qwen3-VL architecture) ingests RGB frames at native aspect ratio plus a language instruction, then emits high-level action tokens. This is where multi-step task decomposition happens.
- System 1: Diffusion Transformer. A 32-layer DiT (twice the depth of N1.5's 16-layer head) takes those action tokens plus live proprioceptive state (joint positions, velocities, end-effector poses) and denoises them into continuous motor commands.

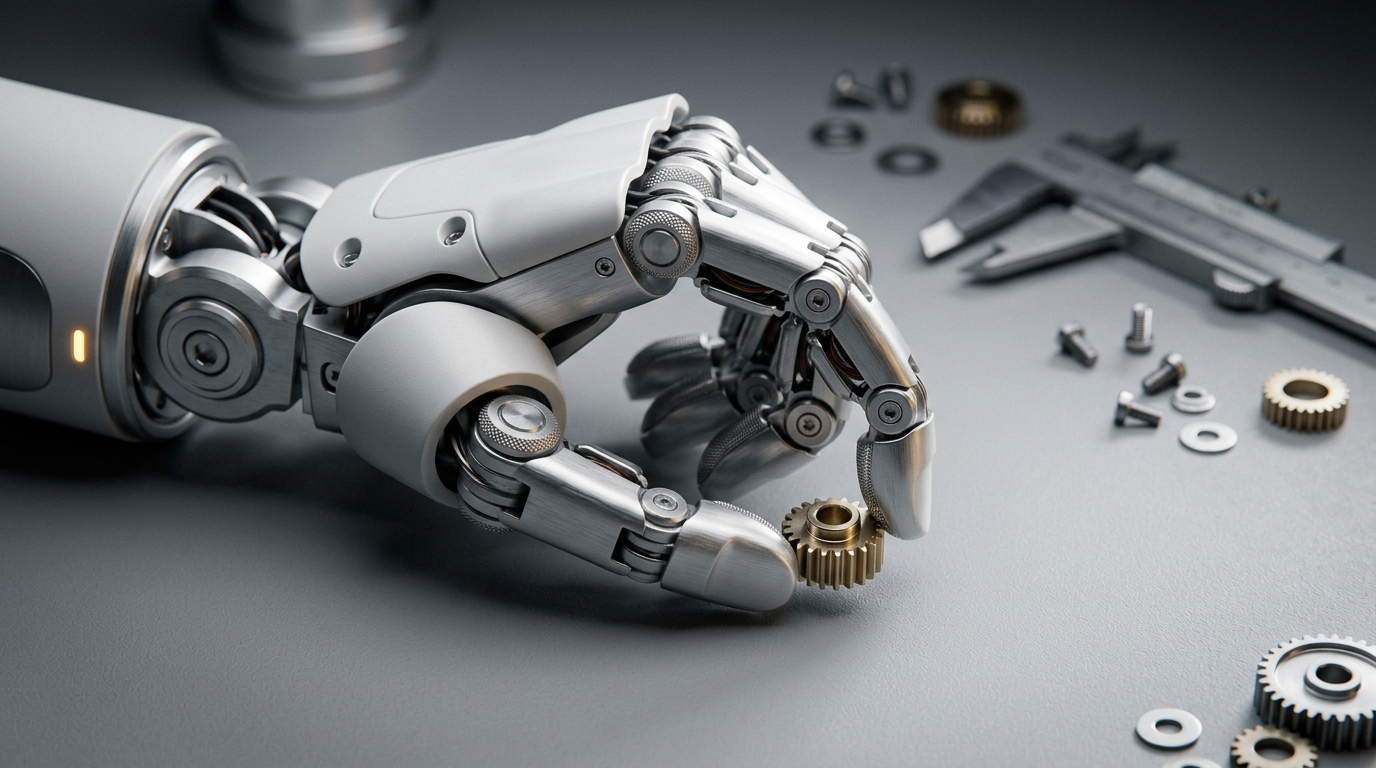

The model outputs action vectors mapped to the robot's degrees of freedom, including the 22-DoF hand control that previous generalist models couldn't reliably hit.
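To make the System 1 step concrete, here is a toy sketch of iterative denoising over an action chunk. Everything in it is an assumption for illustration: `toy_denoiser` is a hypothetical stand-in for the DiT, the 16-step horizon is made up, and the 4 denoising steps and 22 DoF are borrowed from figures quoted elsewhere in this article. It is not NVIDIA's implementation.

```python
import math
import random


def toy_denoiser(noisy_actions, vlm_tokens, proprio):
    """Hypothetical stand-in for the DiT: returns a predicted clean action chunk.

    A real model would attend over the tokens and state; this toy maps the
    conditioning to one constant target so the example stays deterministic.
    """
    target = math.tanh(sum(vlm_tokens) / len(vlm_tokens))
    target += 0.1 * sum(proprio) / len(proprio)
    return [[target] * len(row) for row in noisy_actions]


def denoise_action_chunk(vlm_tokens, proprio, horizon=16, dof=22, steps=4, seed=0):
    """Start from Gaussian noise and refine it toward the denoiser's prediction."""
    rng = random.Random(seed)
    # Pure noise with one row per future timestep and one column per DoF.
    actions = [[rng.gauss(0.0, 1.0) for _ in range(dof)] for _ in range(horizon)]
    for k in range(steps):
        predicted = toy_denoiser(actions, vlm_tokens, proprio)
        # Close a growing fraction of the remaining gap: 1/4, 1/3, 1/2, then 1.
        alpha = 1.0 / (steps - k)
        actions = [
            [a + alpha * (p - a) for a, p in zip(row, pred_row)]
            for row, pred_row in zip(actions, predicted)
        ]
    return actions


vlm_tokens = [1.0] * 8   # placeholder "action tokens" from System 2
proprio = [0.0] * 44     # placeholder joint positions and velocities
chunk = denoise_action_chunk(vlm_tokens, proprio)
print(len(chunk), len(chunk[0]))  # 16 22
```

The point of the sketch is the control flow: the slow system produces conditioning once, and the fast system runs a small, fixed number of refinement passes to turn noise into a whole chunk of motor commands.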

EgoScale: trained on humans, deployed on robots

The most important thing about N1.7 isn't the architecture — it's the data. NVIDIA pre-trained the model on 20,854 hours of human egocentric video spanning more than 20 task categories, from manufacturing and retail to healthcare and home environments.

That's an order of magnitude more than the few thousand hours of robot teleoperation data used for N1.6.

"Humans and robots share similar embodiments — two hands, a first-person viewpoint, a world full of objects to manipulate. Training on sensorized human video gives the model rich manipulation priors without requiring every behavior to be demonstrated on a physical robot first." — NVIDIA Research

The bet: teleoperation doesn't scale. You can't have a million people manually puppeting humanoids for a million hours. But you can collect human-perspective video at internet scale.

The first dexterity scaling law

This is the headline scientific contribution. NVIDIA reports that more egocentric human data produces predictable, consistent improvements in dexterous manipulation — going from 1k to 20k hours of human video more than doubles average task completion on contact-rich tasks.

That's a scaling law in the same family as the ones that made LLMs work. For the first time, robot dexterity has a clear data axis: add recordings and compute, and the curve climbs.
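One way to read the "more than doubles" figure is through a power-law lens. The sketch below assumes, purely for illustration, that task completion grows as hours raised to some exponent b; the functional form NVIDIA actually fits is not stated in this article. Under that assumption, a doubling across a 20x increase in data implies a lower bound on b.

```python
import math


def implied_exponent(data_ratio, improvement_ratio):
    """Exponent b such that improvement_ratio == data_ratio ** b.

    Assumes a pure power law (completion proportional to hours ** b), which is
    an illustrative assumption rather than the fit NVIDIA reports.
    """
    return math.log(improvement_ratio) / math.log(data_ratio)


# 1k -> 20k hours is a 20x increase; "more than doubles" means a ratio of at least 2.
b_lower_bound = implied_exponent(data_ratio=20.0, improvement_ratio=2.0)
print(round(b_lower_bound, 3))  # 0.231
```

Since completion rates cap at 100%, any such exponent can only describe a limited range of the curve.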

The practical implication: 22 DoF hands can now perform contact-rich tasks like small parts assembly that generalist robot models have historically failed at. NVIDIA validated this on Unitree G1 humanoids, the bimanual YAM manipulator, and AGIBot Genie 1.

Real install, real inference

The repo lives at github.com/NVIDIA/Isaac-GR00T under an Apache-2.0 license. Setup uses uv for dependency management:

```bash
git clone --recurse-submodules https://github.com/NVIDIA/Isaac-GR00T
cd Isaac-GR00T
bash scripts/deployment/dgpu/install_deps.sh
source .venv/bin/activate
```

Spin up a policy server:

```bash
uv run python gr00t/eval/run_gr00t_server.py \
  --embodiment-tag GR1 \
  --model-path nvidia/GR00T-N1.7
```

Then drive your environment with the client:

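```python
from gr00t.policy.server_client import PolicyClient

# Connect to the policy server started above.
policy = PolicyClient(host="localhost", port=5555)

# `env` is your own Gymnasium-style environment, created elsewhere.
obs, info = env.reset()
action, info = policy.get_action(obs)
obs, reward, done, truncated, info = env.step(action)
```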
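The single step above extends naturally to a full episode. The following is a minimal sketch, assuming a Gymnasium-style environment (the five-value `step` return used above) and any object with a `get_action(obs)` method that returns an `(action, info)` pair. `StubEnv`, `StubPolicy`, and `run_episode` are hypothetical names made up so the loop can run without a robot or a policy server; in practice you would pass in your own environment and the `PolicyClient` instance.

```python
class StubEnv:
    """Hypothetical environment that terminates after a fixed number of steps."""

    def __init__(self, horizon=5):
        self.horizon = horizon
        self.t = 0

    def reset(self):
        self.t = 0
        return {"t": self.t}, {}

    def step(self, action):
        self.t += 1
        done = self.t >= self.horizon
        # Return order: obs, reward, done, truncated, info
        return {"t": self.t}, 1.0, done, False, {}


class StubPolicy:
    """Hypothetical policy that always returns a zero action for 22 DoF."""

    def get_action(self, obs):
        return [0.0] * 22, {}


def run_episode(env, policy, max_steps=200):
    """Query the policy every step until the episode ends or max_steps is hit."""
    obs, info = env.reset()
    total_reward = 0.0
    steps_taken = 0
    while steps_taken < max_steps:
        action, _ = policy.get_action(obs)
        obs, reward, done, truncated, info = env.step(action)
        total_reward += reward
        steps_taken += 1
        if done or truncated:
            break
    return steps_taken, total_reward


steps, total = run_episode(StubEnv(horizon=5), StubPolicy())
print(steps, total)  # 5 5.0
```

The `max_steps` cap is a design choice worth keeping on real hardware: it bounds the rollout even if the environment never signals termination.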

Inference performance for the prior-generation N1.6-3B (4 denoising steps, single camera view) lands at:

| Device | E2E Latency | Frequency |
|---|---|---|
| RTX 5090 | 37 ms | 27.3 Hz |
| H100 | 38 ms | 26.3 Hz |
| RTX 4090 | 44 ms | 22.8 Hz |
| Jetson Thor | 105 ms | 9.5 Hz |
| DGX Spark | 89 ms | 11.2 Hz |

Inference requires at least 16 GB of VRAM; fine-tuning needs 40 GB or more. N1.7 supports the same hardware footprint: Ampere through Blackwell GPUs, plus Jetson.
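To turn the latency table into a go/no-go check for a given control rate, the arithmetic is simply frequency = 1000 / latency in milliseconds. A minimal sketch follows; the latencies are copied from the table above, while the helper names and the 20 Hz target are arbitrary choices for illustration. Because the table's latencies are rounded, the computed rates can differ slightly from its Frequency column.

```python
# End-to-end latencies in milliseconds, copied from the N1.6-3B table.
LATENCY_MS = {
    "RTX 5090": 37,
    "H100": 38,
    "RTX 4090": 44,
    "Jetson Thor": 105,
    "DGX Spark": 89,
}


def max_rate_hz(latency_ms):
    """Highest policy rate achievable if each inference must finish before the next starts."""
    return 1000.0 / latency_ms


def devices_meeting(target_hz, latencies=LATENCY_MS):
    """Names of devices whose latency supports at least target_hz."""
    return [name for name, ms in latencies.items() if max_rate_hz(ms) >= target_hz]


print(devices_meeting(20.0))  # ['RTX 5090', 'H100', 'RTX 4090']
```

This treats inference as strictly sequential, one call per control tick. A deployment that executes several actions from each predicted chunk between inferences would need a different budget calculation.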

Commercial licensing changes the calculus

This is the unsexy detail that matters most for industry. GR00T N1.7 is commercially licensed in early access — meaning robotics companies can deploy it inside paid products without the licensing landmines that come with research-only checkpoints.

For factories, that's the unlock. Material handling, packaging, inspection — these are repetitive contact-rich tasks where a 27 Hz policy with reasonable dexterity can replace bespoke hand-coded behaviors. Companies like Franka Robotics, NEURA, and Humanoid are already running GR00T-enabled workflows.

Upgrading from N1.6

NVIDIA's documentation calls it a drop-in swap: point --model-path to nvidia/GR00T-N1.7 and your existing embodiment configs and modality configurations carry over. The architectural changes — the new VLM backbone and the EgoScale pre-training — show up as better out-of-the-box dexterity before any fine-tuning.

If you're still on N1.5, you'll want to migrate the checkpoint format and update to the new VLM-coupled action chunks. The n1.5-release branch is preserved if you need to keep the old workflow alive.

What's missing

Early access means the licensing terms vary by use case — production deployments may need to contact NVIDIA. There's no published comparison against closed proprietary VLAs from Figure AI or 1X. And the dexterity scaling law is reported on internal benchmarks; independent reproduction will take time.

Inference at 9–11 Hz on edge hardware (Jetson Thor, DGX Spark), going by the N1.6 numbers above, is also borderline for high-frequency manipulation. Production deployments will want H100-class GPUs in the loop, which has cost and physical-footprint implications most factory cells aren't built for.

The Bottom Line

GR00T N1.7 is the first humanoid robot foundation model that (a) has a measurable scaling law, (b) can be deployed commercially, and (c) ships as open weights you can fine-tune on your own embodiment. That combination didn't exist a year ago.

The bigger story is how it was trained. If 20,854 hours of human video genuinely scale robot dexterity the way internet text scaled LLM reasoning, the bottleneck on humanoid robotics shifts from teleoperation studios to camera rigs, and that's a much easier supply problem to solve.