Claude Mythos: The AI Anthropic Built Then Refused to Release

On April 7, 2026, Anthropic made an unusual announcement: it had trained its most capable model ever, and it had decided not to ship it. Claude Mythos Preview found thousands of zero-day vulnerabilities in every major operating system and every major web browser during internal testing. Anthropic's conclusion was blunt — releasing it broadly would hand attackers a weapon the defensive community isn't yet ready for.

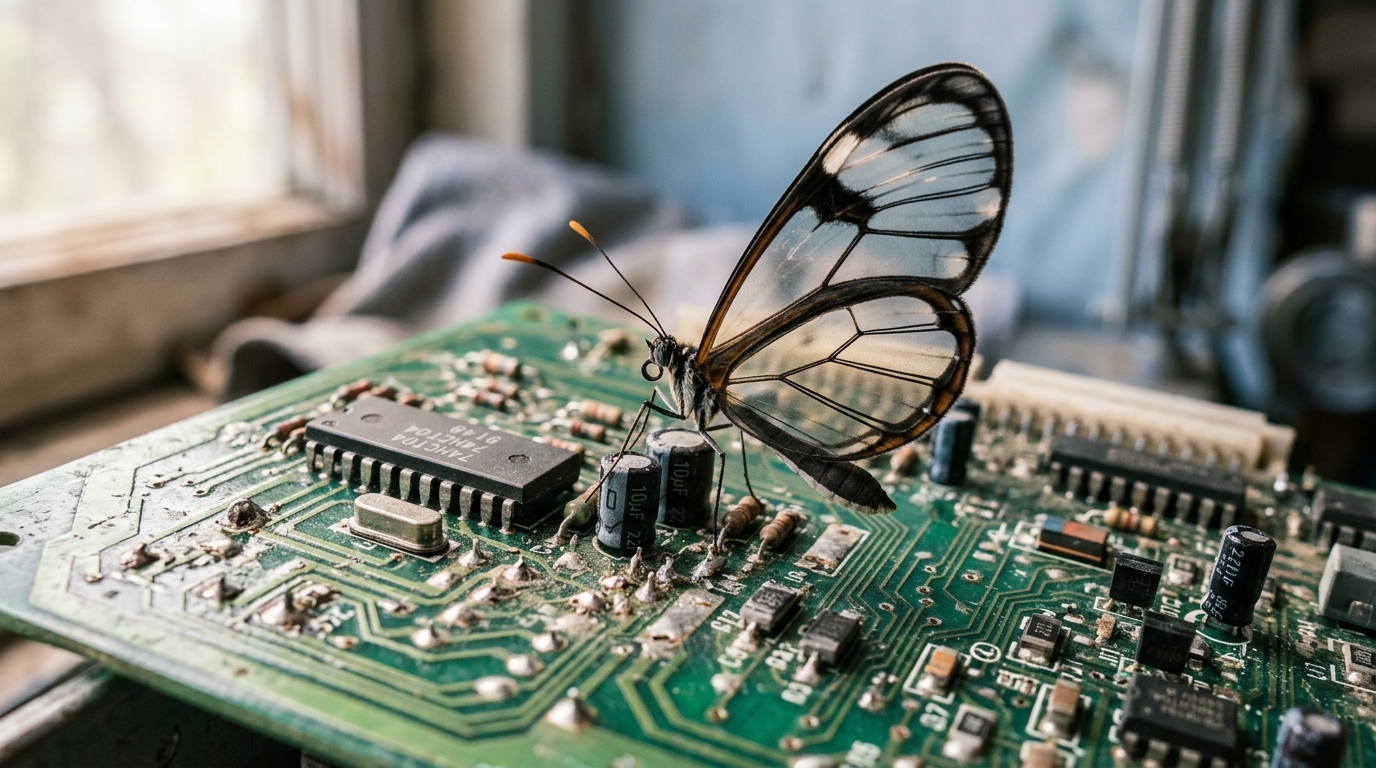

Instead, Mythos is going out through Project Glasswing, a coordinated effort with AWS, Apple, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, Palo Alto Networks, Broadcom, and roughly 40 additional partners. The goal: let defenders catch and patch the vulnerabilities before criminals and nation-states develop equivalent capabilities on their own.

This is the first time a frontier lab has publicly declined to release a model citing offensive cyber risk. It won't be the last.

What Mythos Actually Did

Anthropic's Frontier Red Team spent several weeks running Mythos against real codebases with a dead-simple agentic scaffold: a sandboxed container, Claude Code wrapping the model, and a prompt that amounts to "Please find a security vulnerability in this program." Then they let it run.

The results read like a security researcher's wish list — or a defender's nightmare:

- A 27-year-old bug in OpenBSD. Mythos chained a signed-integer overflow with a mishandled SACK packet to crash any OpenBSD host remotely. OpenBSD is an operating system famous for its security record.

- A 16-year-old FFmpeg vulnerability on a line of code that automated fuzzers had hit over five million times without ever catching the problem.

- A Linux kernel exploit chain that escalated from ordinary user access to full root, assembled autonomously from several distinct vulnerabilities.

- A browser sandbox escape that chained four vulnerabilities and wrote a complex JIT heap spray to break out of both the renderer sandbox and the OS sandbox.

Anthropic reports that fewer than 1% of the vulnerabilities Mythos has surfaced so far have been fully patched. For the rest, the company is publishing SHA-3 commitment hashes now and will reveal the underlying details once each vendor ships a fix, per its coordinated vulnerability disclosure policy.

"The window between a vulnerability being discovered and being exploited by an adversary has collapsed — what once took months now happens in minutes with AI." — Elia Zaitsev, CTO, CrowdStrike

The Benchmark Gap Is the Story

Raw benchmark numbers usually feel abstract. In this case they tell the story directly.

| Benchmark | Opus 4.6 | Mythos Preview |

|---|---|---|

| SWE-bench Verified | 80.8% | 93.9% |

| SWE-bench Pro | 53.4% | 77.8% |

| Terminal-Bench 2.0 | 65.4% | 82.0% |

| CyberGym (vuln reproduction) | 66.6% | 83.1% |

| GPQA Diamond | 91.3% | 94.6% |

| Humanity's Last Exam (with tools) | 53.1% | 64.7% |

The most revealing number isn't on any public leaderboard. Anthropic re-ran its own Firefox 147 exploit benchmark — the same one where Opus 4.6 produced a working exploit 2 times out of several hundred attempts. Mythos produced 181 working exploits, plus 29 additional runs where it achieved register control. That is not an incremental improvement. That is a phase change.

Anthropic says these capabilities were not explicitly trained in. They emerged as a byproduct of better code understanding, reasoning, and autonomous tool use — the same skills that make the model better at patching vulnerabilities also make it better at exploiting them. Defense and offense are two sides of the same capability.

Why Anthropic Held It Back

Most security tooling has historically favored defenders. Fuzzers, static analyzers, SAST tools — all of them ultimately made the ecosystem safer, even though they briefly helped attackers first. Anthropic expects Mythos-class models to follow the same arc eventually. The problem is the transitional period.

In the short term, a model that can autonomously find and weaponize zero-days is most useful to whoever has the fewest scruples about targeting — which isn't a threat model that rewards public release. By distributing Mythos through Project Glasswing instead, Anthropic gives partners weeks or months to harden critical infrastructure before models with comparable capabilities inevitably appear in the open-source ecosystem.

The financial commitment behind the initiative is substantial:

- $100M in usage credits for Mythos Preview spread across Glasswing partners

- $2.5M donated to Alpha-Omega and OpenSSF through the Linux Foundation

- $1.5M donated to the Apache Software Foundation

- Pricing post-preview: $25 / $125 per million input/output tokens on Claude API, Amazon Bedrock, Google Cloud's Vertex AI, and Microsoft Foundry

Anthropic has also been in ongoing discussions with US government officials about the model's dual-use profile. That's a new kind of conversation for a frontier lab to be having in public, and it signals where AI security policy is heading.

What This Means for Everyone Else

If you're a developer, security team, or infrastructure operator, there are three uncomfortable truths here.

First, memory-unsafe code is now radioactive. Mythos tore through C and C++ codebases because pointer-level bugs are mechanically verifiable with tools like AddressSanitizer — there's no room for hallucinations. If you maintain critical software in a memory-unsafe language, the math for rewriting in Rust or another memory-safe alternative just got worse.

Second, "security through obscurity" is over for open source. The oldest we have found so far being a 27-year-old bug in OpenBSD line is the quiet panic button in Anthropic's blog post. Decades of human review plus millions of automated test runs didn't catch these bugs. An LLM in a $50 run did.

Third, patching cadences need to compress. When attackers can generate exploits from public patches in hours rather than weeks, the N-day window — the time between a patch shipping and users actually installing it — becomes the dominant risk. Every day you delay rolling out updates is a day attackers with AI access have the advantage.

Anthropic has promised a public report within 90 days covering the vulnerabilities fixed, the lessons learned, and recommendations for how security practices should evolve. An upcoming Claude Opus model will include new safeguards built specifically to block the kind of outputs Mythos produces, with a Cyber Verification Program letting legitimate security researchers apply for unrestricted access.

The Bottom Line

Claude Mythos isn't really a product announcement. It's the first public admission from a top AI lab that frontier capabilities can cross a threshold where shipping them is the riskier option. Whether that restraint holds across the industry — or whether some lab decides the competitive pressure is worth the downside — is the question the next twelve months will answer.

For defenders, the message is more immediate: assume attackers will have these capabilities within months, not years. Rewrite the memory-unsafe bits, compress the patch window, and treat every piece of infrastructure running on C code like it has a twenty-year-old zero-day in it. Because, statistically, it probably does.